How we built Pusher-JS 2.0 – part 1 – Basics

A walkthrough of how we upgraded our connection strategy for the pusher-js 2.0.0 library.

Introduction

Last month we released pusher-js 2.0, which represents a few months’ worth of development effort, so we wanted to share some details about the work involved and the changes included in this release. As the release announcement states, our main goal with pusher-js 2.0 was to improve connectivity, so in this blog post we’ll focus on explaining how the new client tries to establish the best possible connection.

Why it’s not that simple

There are surprisingly many things that can go wrong when trying to establish a real-time connection:

- a browser might not support native WebSockets,

- Flash might not be installed or might simply be blocked,

- firewalls can block specific ports (e.g. 843 for Flash),

- some proxies might not support the WebSocket protocol,

- other proxies simply don’t like persistent connections and terminate them.

All these problems manifest themselves in different ways: some are easy to resolve, while others take a significant amount of time to detect.

Browser-related issues

These are the simplest to solve, just by running a few basic run-time checks. Checking for WebSocket/Flash support is easy; figuring out the best HTTP fallback is slightly more difficult. We won’t delve into the details here.
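As a rough illustration of what such a run-time check could look like, the sketch below probes an environment object for the constructors a transport would need. The `BrowserEnv` type, the `detectTransports` function and the transport labels are invented for this example and are not the pusher-js API; Flash detection is left out entirely.

```typescript
// Hypothetical shape of the globals we probe; in a browser the global object would be passed in.
type BrowserEnv = {
  WebSocket?: unknown;
  XMLHttpRequest?: unknown;
};

// Returns labels (invented for this sketch) for the transports the environment appears to support.
function detectTransports(env: BrowserEnv): string[] {
  const available: string[] = [];
  if (env.WebSocket != null) {
    available.push("ws");
  }
  if (env.XMLHttpRequest != null) {
    available.push("xhr");
  }
  return available;
}
```

Passing the environment in as an argument, rather than reading globals directly, keeps the check easy to exercise outside a browser.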

Dealing with unsuccessful connection attempts

The first of our significant problems is handling connections that didn’t succeed. Unfortunately, clients can’t really figure out what is happening to the connection:

- is the network latency just bad?

- is the client behind a firewall/proxy that blocks a specific protocol?

- are only WebSockets affected? Will HTTP fallbacks work?

There are a few ways for the client to act in this situation:

- Wait until the connection fails and then try an HTTP fallback,

- Launch WebSockets and HTTP transports simultaneously,

- Start with WebSockets, wait a moment and then try an HTTP fallback in parallel.

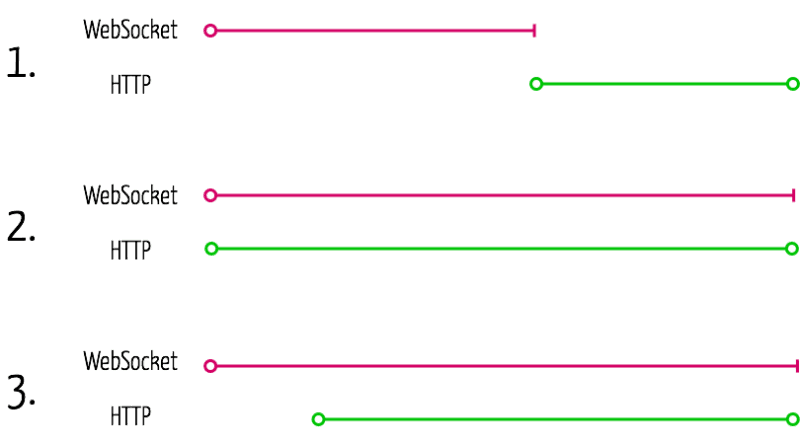

The first method is far from perfect: if WebSockets fail, we waste a significant amount of time idling. The second option might sound great, but handling unnecessary HTTP traffic would raise our infrastructure costs substantially.

We decided to go with option #3: we assume that WebSockets will connect quickly. If that doesn’t happen, we try one of the HTTP fallbacks (streaming or polling using XHR, iframes or JSONP) and wait for one of the connections to succeed. Currently, we don’t support switching transports on the fly, so the first successful connection wins, but we’ll add that feature in the future.
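The delayed-fallback idea can be sketched roughly as follows. This is a minimal illustration under our own assumptions, not the code inside pusher-js: the `Transport` type, the `connectWithDelayedFallback` function and the injectable `schedule` parameter are all hypothetical names.

```typescript
// A transport is anything that can try to connect and report success via a callback.
type Transport = {
  name: string;
  connect(onOpen: () => void): void;
};

// Injectable timer, so the logic can be exercised without real delays.
type Schedule = (fn: () => void, ms: number) => void;

const realSchedule: Schedule = (fn, ms) => {
  setTimeout(fn, ms);
};

function connectWithDelayedFallback(
  primary: Transport,
  fallback: Transport,
  delayMs: number,
  onConnected: (transportName: string) => void,
  schedule: Schedule = realSchedule
): void {
  let winner: string | null = null;

  // The first transport to open wins; later successes are ignored.
  const settle = (name: string) => () => {
    if (winner === null) {
      winner = name;
      onConnected(name);
    }
  };

  primary.connect(settle(primary.name));

  // Start the fallback only if the primary has not connected within the delay.
  schedule(() => {
    if (winner === null) {
      fallback.connect(settle(fallback.name));
    }
  }, delayMs);
}
```

A real client would also close the losing connection and handle errors from both transports; the sketch leaves that out to keep the race itself visible.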

We still need to answer one question: how long should the delay be? Short delays improve connection time when a client ends up with an HTTP transport, but increase the load on our servers. The longer the delay, the closer we get to option #1.
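To make the trade-off concrete, here is a small model of the delayed strategy. The `modelConnect` function and all the numbers are hypothetical and chosen only for illustration; they are not measurements.

```typescript
type Outcome = { transport: "ws" | "http"; elapsedMs: number; fallbackStarted: boolean };

// wsMs: time for WebSockets to connect, or null if they never connect.
// httpMs: time for the HTTP fallback to connect once it has been started.
function modelConnect(wsMs: number | null, httpMs: number, delayMs: number): Outcome {
  // WebSockets connected before the delay expired: no HTTP request is ever made.
  if (wsMs !== null && wsMs <= delayMs) {
    return { transport: "ws", elapsedMs: wsMs, fallbackStarted: false };
  }
  const httpDoneAt = delayMs + httpMs;
  // Both transports are now racing; whichever finishes first wins.
  if (wsMs !== null && wsMs <= httpDoneAt) {
    return { transport: "ws", elapsedMs: wsMs, fallbackStarted: true };
  }
  return { transport: "http", elapsedMs: httpDoneAt, fallbackStarted: true };
}

// WebSockets blocked, HTTP fallback takes 500 ms: a longer delay only adds waiting time.
console.log(modelConnect(null, 500, 1000).elapsedMs); // 1500
console.log(modelConnect(null, 500, 5000).elapsedMs); // 5500

// Slow but working WebSockets (1500 ms): a short delay triggers an HTTP request that was not needed.
console.log(modelConnect(1500, 500, 1000).fallbackStarted); // true
console.log(modelConnect(1500, 500, 2000).fallbackStarted); // false
```

In this model a blocked client always pays the full delay before the fallback even starts, while a client with slow WebSockets generates extra HTTP traffic whenever the delay is shorter than its WebSocket connection time.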

We collected a significant amount of data from clients during the beta-testing phase and found that a 2-second delay provided the best compromise between cost and latency. We’ll cover this in more detail in the third post in this series.

Unexpected closures

Some clients run behind old or restrictive proxies, which tend to terminate long-running connections. Of course, reconnecting every few seconds leads to a bad user experience, so we want to disable problematic transports.

For each session, the pusher-js library gives each WebSocket-based transport two chances to connect: two lives. Every time the connection is closed uncleanly, we take one life away from the transport. When the number of lives drops to zero, the transport is considered dead and will not be used in future attempts.
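The bookkeeping described above fits in a small class. The following is a sketch with hypothetical names (`TransportLives`, `reportUncleanClose`), not the actual pusher-js implementation:

```typescript
class TransportLives {
  private readonly initialLives: number;
  private readonly remaining = new Map<string, number>();

  constructor(initialLives: number = 2) {
    this.initialLives = initialLives;
  }

  // Transports that have never failed still have all their lives.
  livesLeft(transport: string): number {
    return this.remaining.get(transport) ?? this.initialLives;
  }

  isAlive(transport: string): boolean {
    return this.livesLeft(transport) > 0;
  }

  // Call when a connection over this transport closes uncleanly.
  reportUncleanClose(transport: string): void {
    this.remaining.set(transport, Math.max(0, this.livesLeft(transport) - 1));
  }
}
```

A connection strategy would consult `isAlive` before each attempt and skip any transport that has run out of lives. Since the counter lives in memory, it resets with every new session.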

Transport caching

All these strategies are needed when we connect for the first time. But can we learn anything from the first connection attempt? Do we need to go through all these steps on subsequent attempts?

Fortunately, we can cache the last connected transport’s details in the browser’s local storage between page views. Before every connection attempt, Pusher will check the cache and figure out whether it needs to run the complete strategy. This way we can jump straight to a transport that is most likely to work and connect quickly.

We invalidate the cache after a few minutes to make sure it’s not stale, since the client’s environment might change.
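A cache with expiry along these lines might look like the sketch below. The storage key, the 3-minute lifetime and the function names are assumptions made for this example; the real library may store different details and use a different timeout.

```typescript
// The subset of the Web Storage API that we need; window.localStorage satisfies it.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
  removeItem(key: string): void;
}

const CACHE_KEY = "exampleTransportCache"; // hypothetical key name
const CACHE_TTL_MS = 3 * 60 * 1000; // assumed value for "a few minutes"

function rememberTransport(store: KeyValueStore, transport: string, nowMs: number): void {
  store.setItem(CACHE_KEY, JSON.stringify({ transport, timestamp: nowMs }));
}

// Returns the cached transport name, or null if there is no fresh, well-formed entry.
function recallTransport(store: KeyValueStore, nowMs: number): string | null {
  const raw = store.getItem(CACHE_KEY);
  if (raw === null) {
    return null;
  }
  try {
    const entry = JSON.parse(raw);
    const wellFormed = typeof entry.transport === "string" && typeof entry.timestamp === "number";
    if (wellFormed && nowMs - entry.timestamp <= CACHE_TTL_MS) {
      return entry.transport;
    }
  } catch {
    // Corrupt entry: fall through and discard it.
  }
  store.removeItem(CACHE_KEY);
  return null;
}
```

In a browser this would be called as `recallTransport(window.localStorage, Date.now())` before connecting, and `rememberTransport` after a successful connection. A `null` result means the complete strategy has to run.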

More to come

We still have a few things we want to tell you about, such as the representation of strategies inside pusher-js and how we used our metrics to develop the library. We’ll dig deeper in future articles.